Purchasing a microscope camera is one of the most daunting tasks you might have to undertake. Before you set out to buy that camera, carefully consider your applications. Things like sample brightness or the speed of the phenomenon you are trying to capture can dictate your choices. Also, this is the time to make peace with the fact that you might have to make compromises between different aspects of the camera’s performance to accommodate your experiment. For example, you may have to choose between camera sensitivity and speed of capture.

In this two-part article we point out the main considerations to take into account when buying a new camera. In this first part, we will help you decide between a color or monochrome camera. We will also help you calculate the pixel size your new camera should have to match your microscope.

1. Color or Monochrome Microscope Camera?

The single most important factor in choosing between a color and a monochrome camera is the sample preparation. The rule of thumb? Use a monochrome camera for fluorescently labeled samples and a color camera for bright-field imaging of, for example, hematoxylin/eosin (H&E) stained samples. If you image only histology or only fluorescent samples, the choice is pretty straightforward.

What if, however, you need to use your microscope for both fluorescence and bright-field imaging? Colleagues or camera vendors will probably suggest a color camera, arguing that although it may not be optimal for fluorescence, it will get the job done. There is some truth to this: a color camera can acquire images of fluorescent samples. However, its color filters reduce the overall amount of light collected, which makes imaging dim samples difficult, if not impossible.

Put this article into practice

Choose a free resource to help you move forward

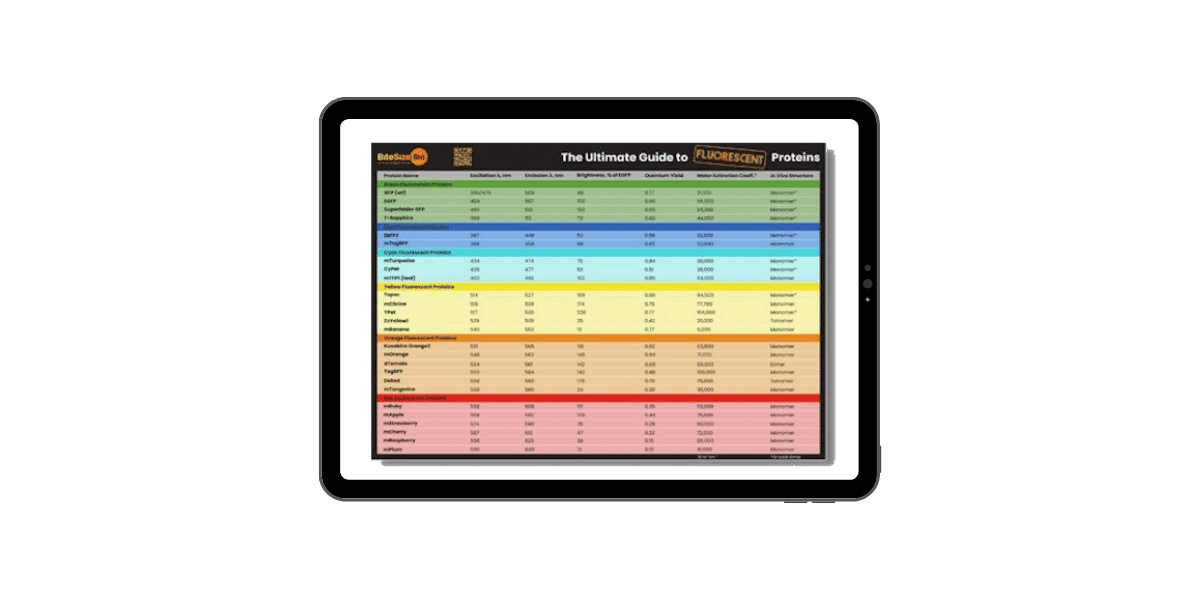

POSTER

Fluorescent Proteins Guide

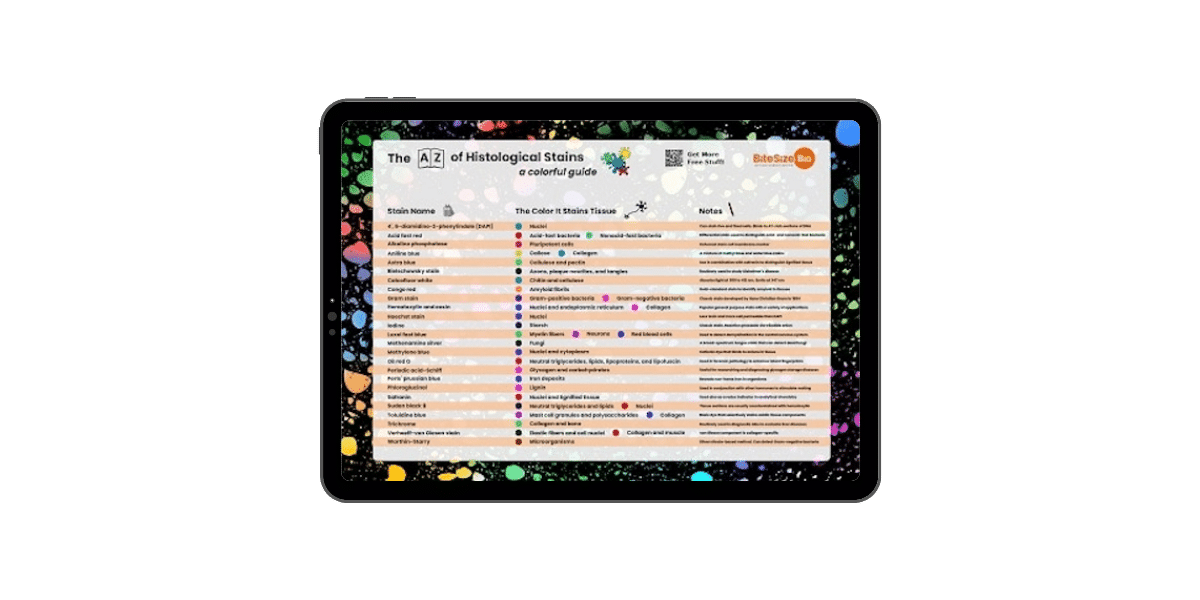

POSTER

Histological Stains Poster

Why Color Cameras Are Not a Suitable Choice for Fluorescent Imaging

Most color cameras place a color filter array, known as a Bayer filter, over the camera’s sensor (Figure 1). In each 2 × 2 quadrant of pixels, one pixel sits under a red filter and detects red light, while the other three block it. The same goes for blue. Green is the exception: two pixels out of four collect green light. The camera generates the color image by interpolating the values for the pixels that recorded no information for a given color. For example, if a pixel lies between two red pixels and its own filter blocked red, its red value is interpolated from those neighbors. Because each color is measured directly by only a quarter (red, blue) or half (green) of the pixels, and the filters absorb the light they block, a color camera does not work well for dim samples and does not deliver high-quality fluorescence images even for bright samples.
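
To make the pixel arithmetic concrete, here is a minimal sketch that tiles an idealized RGGB Bayer pattern over a small sensor and counts how many pixels sit under each filter. The tile layout and the perfect, non-overlapping filters are assumptions for illustration; real sensors use several layouts (RGGB, GRBG, BGGR) and filters whose spectra overlap somewhat.

```python
# Idealized RGGB Bayer tile (assumption: real sensors may use GRBG, BGGR, etc.,
# but every variant has the same 1 red : 2 green : 1 blue proportion).
BAYER_TILE = [["R", "G"],
              ["G", "B"]]


def channel_fractions(width: int, height: int) -> dict:
    """Return the share of pixels that sit under each color filter."""
    counts = {"R": 0, "G": 0, "B": 0}
    for y in range(height):
        for x in range(width):
            # The 2 x 2 tile repeats across the whole sensor.
            counts[BAYER_TILE[y % 2][x % 2]] += 1
    total = width * height
    return {color: n / total for color, n in counts.items()}


fractions = channel_fractions(8, 8)
print(fractions)  # {'R': 0.25, 'G': 0.5, 'B': 0.25}

# In this idealized model, a green-emitting fluorophore is measured directly
# only by the green-filtered pixels; its value at the red- and blue-filtered
# pixels has to be interpolated. A monochrome sensor measures it at every pixel.
print(f"Pixels that directly sample green emission: {fractions['G']:.0%}")
```

Even under these generous assumptions, half of the sensor never directly records a green fluorophore, which is the core of the argument for a monochrome camera.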

With this in mind, it is safe to say that if you are planning to image histology samples as well as fluorescent samples, it is worth buying both a color and a monochrome camera. Before you do that, however, make sure your microscope has two available camera ports. If the microscope has only one camera port, you must come up with a viable plan to swap the cameras on the microscope based on the users’ needs.

2. Spatial Resolution and Sampling

To take advantage of the full resolution of your microscope, you should match the resolution of the camera to that of the microscope. If the camera’s pixels are too large for the imaging system, the image is undersampled, which causes loss of resolution and artifacts (aliasing) in the final image. If the pixels are too small, the image is oversampled: files become unnecessarily large, and the light is spread over more pixels, so each pixel collects less signal. It is, therefore, critical to determine the correct pixel size for the camera.
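
Aliasing can be illustrated with a one-dimensional sketch: a periodic pattern sampled too coarsely shows up at a false, lower frequency. The snippet below uses the standard frequency-folding formula and assumes idealized point sampling (real pixels also average the light over their area); the 10 µm stripe pattern and the pixel sizes are hypothetical values chosen for illustration.

```python
def apparent_frequency(signal_freq: float, sampling_freq: float) -> float:
    """Frequency a sampled sinusoid appears to have after folding (aliasing).

    Idealized point sampling is assumed; real pixels also average over their area.
    """
    return abs(signal_freq - sampling_freq * round(signal_freq / sampling_freq))


# Hypothetical stripe pattern projected onto the sensor with a 10 um period.
pattern_freq = 1 / 10.0  # cycles per micrometre
for pixel_um in (3.45, 6.5):
    seen = apparent_frequency(pattern_freq, 1 / pixel_um)
    print(f"{pixel_um} um pixels: pattern appears with a {1 / seen:.1f} um period")
```

With 3.45 µm pixels there are almost three samples per period and the pattern is reported correctly; with 6.5 µm pixels there are fewer than two, and the sketch reports a spurious period of roughly 18.6 µm.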

Resolution: The Basics

The lateral resolution (d) of an imaging system is commonly calculated with the Rayleigh criterion, $d = 0.61\lambda/\mathrm{NA}$, where d is the smallest resolvable distance, λ is the wavelength of the light used for imaging, and NA is the numerical aperture of the objective. This equation assumes the imaging system is aberration-free. Real systems never are, but the equation gives the best-case (smallest) resolvable distance and simplifies the calculations below.
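
A quick way to get a feel for the resolution equation is to evaluate it for a few wavelengths and objectives. The sketch below simply implements $d = 0.61\lambda/\mathrm{NA}$; the wavelengths (roughly blue, green, and red emission) and the two NA values are illustrative assumptions, not recommendations.

```python
def lateral_resolution_nm(wavelength_nm: float, na: float) -> float:
    """Smallest resolvable distance d = 0.61 * lambda / NA, assuming aberration-free optics."""
    return 0.61 * wavelength_nm / na


# Illustrative emission wavelengths (roughly blue, green and red dyes) and two
# example objectives: a dry 0.75 NA lens and a 1.4 NA oil-immersion lens.
for wavelength in (460, 520, 610):
    for na in (0.75, 1.4):
        d = lateral_resolution_nm(wavelength, na)
        print(f"lambda = {wavelength} nm, NA = {na}: d = {d:.0f} nm")
```

For green emission and a 1.4 NA objective this gives about 227 nm; longer wavelengths and lower NA both make d larger.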

Taking into account the magnification of the imaging system (M), the minimum resolvable distance between two features as projected onto the camera sensor is $D_r = 0.61\lambda M/\mathrm{NA}$. Don’t forget: you need to use the total magnification (M) of the system, not that of the objective lens alone. If the camera is connected to the microscope with a 0.5x adaptor, you need to account for this.
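
The effect of the adaptor can be checked with a small helper that computes $D_r$ from the objective and adaptor magnifications. This is a sketch under the assumption that the objective and the camera adaptor are the only magnifying elements in the light path; if your microscope has an intermediate magnification changer, it would have to be multiplied in as well. The 60x/1.4 objective and 520 nm emission are hypothetical example values.

```python
def projected_resolution_um(wavelength_nm: float, na: float,
                            objective_mag: float, adapter_mag: float = 1.0) -> float:
    """D_r = 0.61 * lambda * M / NA on the camera sensor, in micrometres.

    M is the total magnification: objective x camera adapter. Any other
    magnifying optics in the light path would need to be multiplied in too.
    """
    total_mag = objective_mag * adapter_mag
    wavelength_um = wavelength_nm / 1000.0
    return 0.61 * wavelength_um * total_mag / na


# Hypothetical setup: 60x / 1.4 NA objective imaging green (520 nm) emission.
direct = projected_resolution_um(520, 1.4, 60)                      # 1x coupling
with_half = projected_resolution_um(520, 1.4, 60, adapter_mag=0.5)  # 0.5x adaptor
print(f"D_r with 1x coupling:  {direct:.2f} um")
print(f"D_r with 0.5x adaptor: {with_half:.2f} um")
```

Halving the total magnification halves $D_r$ (about 13.6 µm versus 6.8 µm here), which is why ignoring the adaptor would throw off every pixel-size calculation that follows.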

Now think of two adjacent spots that need to be resolved in the image captured by the camera. Each illuminated spot generates a diffraction pattern whose bright central maximum is known as the Airy disk. To resolve the two spots, the central maxima of their diffraction patterns must be sufficiently far apart. The two spots are just barely resolved when the central maximum of one pattern falls onto the first minimum of the other (the Rayleigh criterion; Figure 2). If they are closer than that, the two central maxima merge and the spots can no longer be resolved.
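
To see why the Rayleigh separation counts as "barely resolved", the sketch below adds the intensity profiles of two equally bright, incoherent point sources placed exactly one Rayleigh distance apart and measures the dip between them. It assumes an ideal Airy pattern, $I(v) = (2J_1(v)/v)^2$, in the normalized radial coordinate $v = 2\pi\,\mathrm{NA}\,r/\lambda$; the first dark ring then sits at $v \approx 3.83$, which corresponds to $r = 0.61\lambda/\mathrm{NA}$. The Bessel function $J_1$ is evaluated with its power series so the example needs only the standard library.

```python
import math

J1_FIRST_ZERO = 3.8317059702075125  # first positive zero of the Bessel function J1


def bessel_j1(x: float, terms: int = 40) -> float:
    """Power-series evaluation of J1(x); accurate for the small arguments used here."""
    total = 0.0
    for k in range(terms):
        coeff = (-1) ** k / (math.factorial(k) * math.factorial(k + 1))
        total += coeff * (x / 2) ** (2 * k + 1)
    return total


def airy_intensity(v: float) -> float:
    """Normalized Airy pattern I(v) = (2 J1(v) / v)^2, with I(0) = 1."""
    if v == 0:
        return 1.0
    return (2 * bessel_j1(v) / v) ** 2


def two_point_profile(v: float, separation: float) -> float:
    """Incoherent sum of two equal Airy patterns centred at v = 0 and v = separation."""
    return airy_intensity(abs(v)) + airy_intensity(abs(v - separation))


# Rayleigh criterion: the second peak sits on the first minimum of the first.
sep = J1_FIRST_ZERO
dip = two_point_profile(sep / 2, sep) / two_point_profile(0.0, sep)
print(f"Midpoint intensity relative to the peaks: {dip:.3f}")
```

The midpoint should come out close to 0.735 of the peak intensity: a dip of only about a quarter, which is just enough to tell the two spots apart. Moving the spots closer (a smaller `sep`) makes the dip shallower until it disappears.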

Resolution and Pixel Size

To estimate the pixel size the camera needs to sample the image adequately, follow the Nyquist sampling theorem. According to the theorem, an accurate representation of a signal requires a sampling frequency at least twice the highest frequency it contains. In other words, the camera’s pixel size should be no more than half of the projected minimum resolvable distance, $D_r/2$. Camera detectors, however, are arrays of photodiodes (pixels) with a fixed size and square shape. A feature oriented along the pixel diagonal sees a sampling interval $\sqrt{2}$ times the pixel width, so in practice 2.5 to 3 pixels (roughly $2\sqrt{2} \approx 2.8$) are required across each minimum resolvable distance, i.e., each Airy disk radius, to conform to the Nyquist criterion.
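
The 2.5 to 3 pixel rule of thumb translates directly into a check you can run on any camera and objective combination. This sketch counts how many pixels span the projected resolvable distance and labels the result; the thresholds come from the rule of thumb above, while the pixel sizes and objectives in the loop are hypothetical examples, not specific products. "Oversampled" here only means finer than necessary, not an error.

```python
def pixels_per_resolvable_distance(pixel_um, wavelength_nm, na, total_mag):
    """How many camera pixels span the projected distance D_r = 0.61 * lambda * M / NA."""
    d_r_um = 0.61 * (wavelength_nm / 1000.0) * total_mag / na
    return d_r_um / pixel_um


def sampling_verdict(ratio, low=2.5, high=3.0):
    """Classify sampling against the 2.5 to 3 pixels-per-resolvable-distance rule of thumb."""
    if ratio < low:
        return "undersampled"
    if ratio > high:
        return "oversampled"
    return "matched"


# Hypothetical pixel sizes and objective magnifications; 520 nm emission, 1.4 NA.
for pixel_um, mag in [(6.5, 60), (5.0, 60), (6.5, 100), (16.0, 100)]:
    ratio = pixels_per_resolvable_distance(pixel_um, 520, 1.4, mag)
    print(f"{pixel_um:>5} um pixels at {mag}x: {ratio:.2f} px -> {sampling_verdict(ratio)}")
```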

Putting all this together, your camera should have a pixel size of $P = 0.61\lambda M/(3\,\mathrm{NA})$, or at least pretty close to that. Pixels smaller than the optimum are usually less of a problem, because you can use binning (i.e., combining neighboring pixels on your sensor into one “super pixel”) through the acquisition software. As long as the super pixel is still no larger than P, you keep the full resolution and improve the signal-to-noise ratio. Among the types of cameras available today, CMOS cameras usually have the smallest pixels, CCDs lie somewhere in the middle, and EMCCDs have the largest. Of course, pixel size is not the only difference among scientific cameras, but more on that in the second part of the article.
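
The pixel-size formula above, $P = 0.61\lambda M/(3\,\mathrm{NA})$, and the binning argument can be combined in a short sketch: compute the target pixel size, then find the largest square binning factor whose super pixel still does not exceed it. The 3.45 µm native pixel, 520 nm emission, 1.4 NA, and the cap of 8x8 binning are assumptions chosen for illustration.

```python
def target_pixel_um(wavelength_nm, na, total_mag, pixels_per_dr=3.0):
    """Optimal pixel size P = 0.61 * lambda * M / (3 * NA), in micrometres."""
    return 0.61 * (wavelength_nm / 1000.0) * total_mag / (pixels_per_dr * na)


def largest_bin_factor(native_pixel_um, target_um, max_bin=8):
    """Largest n for which an n x n binned super pixel is no larger than the target.

    Returns 0 if even the unbinned pixel is already larger than the target,
    i.e. binning cannot help and the image is undersampled.
    """
    best = 0
    for n in range(1, max_bin + 1):
        if n * native_pixel_um <= target_um:
            best = n
    return best


# Hypothetical camera with 3.45 um pixels; 520 nm emission, 1.4 NA objectives.
for mag in (60, 100):
    target = target_pixel_um(520, 1.4, mag)
    n = largest_bin_factor(3.45, target)
    print(f"{mag}x: target P = {target:.2f} um, usable binning = {n}x{n}")
```

In this example the small pixels are already close to the target at 60x, while at 100x they can be binned 2x2 without losing resolution. A return value of 0 flags the opposite case: pixels that are too large to begin with, which binning cannot fix.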

The Importance of the Sensor Size

Keep in mind that this is the size of the individual pixels on your sensor; it tells you nothing about the overall sensor size. The sensor is rectangular, and its size, as well as its aspect ratio, determines how much of your sample’s area the camera captures. In microscopy, a sensor with a 1:1 aspect ratio may be preferable, because it captures more of the circular illuminated area of the sample (Figure 3).
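
The advantage of a square sensor can be quantified with a little geometry. The sketch below assumes each sensor is the largest rectangle of its aspect ratio that fits inside the circular illuminated field (its diagonal equals the field diameter) and reports what fraction of the circle it covers. Real sensors can be larger or smaller than the field, so treat the numbers as a comparison between shapes, not a prediction for a specific camera.

```python
import math


def covered_fraction(aspect_w: float, aspect_h: float) -> float:
    """Fraction of a circular field covered by the largest inscribed rectangle of this aspect ratio."""
    # Scale the rectangle so its diagonal equals the circle diameter (taken as 1).
    diagonal = math.hypot(aspect_w, aspect_h)
    width, height = aspect_w / diagonal, aspect_h / diagonal
    circle_area = math.pi / 4  # area of a circle with diameter 1
    return (width * height) / circle_area


for name, (w, h) in {"1:1": (1, 1), "4:3": (4, 3), "16:9": (16, 9)}.items():
    print(f"{name:>4} sensor covers {covered_fraction(w, h):.0%} of the circular field")
```

Under this assumption a square sensor covers about 64% of the field ($2/\pi$), a 4:3 sensor about 61%, and a 16:9 sensor only about 54%.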

If the camera you are considering has a small sensor and you wish to maximize the percentage of the field of view you capture, you could use a demagnifying adaptor, e.g., 0.5x or 0.63x. But the adaptor lowers the total magnification M, so you will need to recalculate the pixel size to make sure your camera still matches the resolution of your imaging system.
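
The trade-off can be sketched by recomputing the target pixel size for each adaptor alongside the gain in imaged area. This assumes the sensor, not the microscope’s field stop, is what limits the field of view (so the imaged area scales as $1/M^2$), and uses a hypothetical 60x/1.4 objective, 520 nm emission, and 3.45 µm pixels.

```python
def adapter_tradeoff(objective_mag, adapter_mag, native_pixel_um, wavelength_nm, na):
    """Recompute the target pixel size P for a given camera adaptor.

    Returns (target pixel size in um, whether the native pixels are small
    enough, field-of-view area relative to a 1x adaptor).
    """
    total_mag = objective_mag * adapter_mag
    target_um = 0.61 * (wavelength_nm / 1000.0) * total_mag / (3 * na)
    # Assumes the sensor limits the field of view: imaged area scales as 1 / M^2.
    fov_area_gain = 1.0 / adapter_mag ** 2
    return target_um, native_pixel_um <= target_um, fov_area_gain


# Hypothetical setup: 60x / 1.4 NA objective, 520 nm emission, 3.45 um pixels.
for adapter in (1.0, 0.63, 0.5):
    target, ok, gain = adapter_tradeoff(60, adapter, 3.45, 520, 1.4)
    status = "adequately sampled" if ok else "undersampled"
    print(f"{adapter}x adaptor: P = {target:.2f} um, {gain:.1f}x field area, {status}")
```

In this example the 0.63x adaptor captures about 2.5 times more area, but the target pixel size drops to about 2.85 µm, below the camera’s 3.45 µm pixels, so resolution would be lost.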

So now you should know whether you need a color or a monochrome camera, and you can work out the pixel size your camera should have to take full advantage of the resolution of your imaging system. However, this is not all you have to consider before you make your decision. Stay tuned for the second part of the article, in which we discuss sensitivity, frame rate, and signal-to-noise ratio, among other things. Let’s make sure you invest your hard-earned grant money wisely.
