One of the best parts of R is how extensible it is. Over the years, the community has put together hundreds (thousands?) of amazing packages to make your workflow easier. The downside of this wealth is that it can be hard to find packages that do exactly what you want! Therefore, I’ve put together a list of my favorite packages in no particular order, grouped by their main function. Add your favorites to the comments!

Getting started

Stuck on how to start using these packages? Simply use the following code to install and load the package, or use the GUI in RStudio to do it for you.

Example: package is ggplot2

install.packages("ggplot2") # only needs to be called once

library(ggplot2) # must be called each time you start a new R session

Packages for Visualization

ggplot2

There are many excellent visualization packages out there. However, my favorite, and one of the most popular, is the ‘grammar of graphics’ plot package: ggplot2. Using this package you can create stunning and complex graphs and plots.
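
As a rough illustration of what ggplot2 code looks like, here is a minimal sketch using the mtcars data set that ships with R. The choice of variables, labels, and theme is my own assumption for the example, not a recommendation.

```r
library(ggplot2)

# mtcars ships with R, so no extra data is needed.
p <- ggplot(mtcars, aes(x = wt, y = mpg, colour = factor(cyl))) +
  geom_point(size = 2) +                                      # one point per car
  geom_smooth(method = "lm", formula = y ~ x, se = FALSE) +   # one linear fit per cylinder group
  labs(x = "Weight (1000 lbs)", y = "Miles per gallon", colour = "Cylinders") +
  theme_minimal()

print(p)  # a ggplot object is drawn when it is printed
```

Each `+` adds a layer or a setting to the plot, which is the idea behind the 'grammar of graphics' name.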

gridExtra

The one downside to ggplot2 is that base graphics layout settings [par(mfrow=c(2,2)), for example] have no effect on ggplot2 plots, so you can no longer use them to set up multiple plots in one figure window. Luckily, there is a way around this: with gridExtra, you can place multiple ggplot2 plots in a single figure in any configuration.
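
Here is a small sketch of a 2 x 2 layout with gridExtra, assuming both ggplot2 and gridExtra are installed. The four plots are arbitrary examples built from mtcars.

```r
library(ggplot2)
library(gridExtra)

p1 <- ggplot(mtcars, aes(wt, mpg)) + geom_point()
p2 <- ggplot(mtcars, aes(factor(cyl), mpg)) + geom_boxplot()
p3 <- ggplot(mtcars, aes(hp)) + geom_histogram(bins = 10)
p4 <- ggplot(mtcars, aes(disp, qsec)) + geom_point()

# ncol = 2 gives the same 2 x 2 grid that par(mfrow = c(2, 2)) gives in base graphics
grid.arrange(p1, p2, p3, p4, ncol = 2)

# arrangeGrob() builds the same layout without drawing it, e.g. to save it later
combined <- arrangeGrob(p1, p2, p3, p4, ncol = 2)
```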

Put this article into practice

Choose a free resource to help you move forward

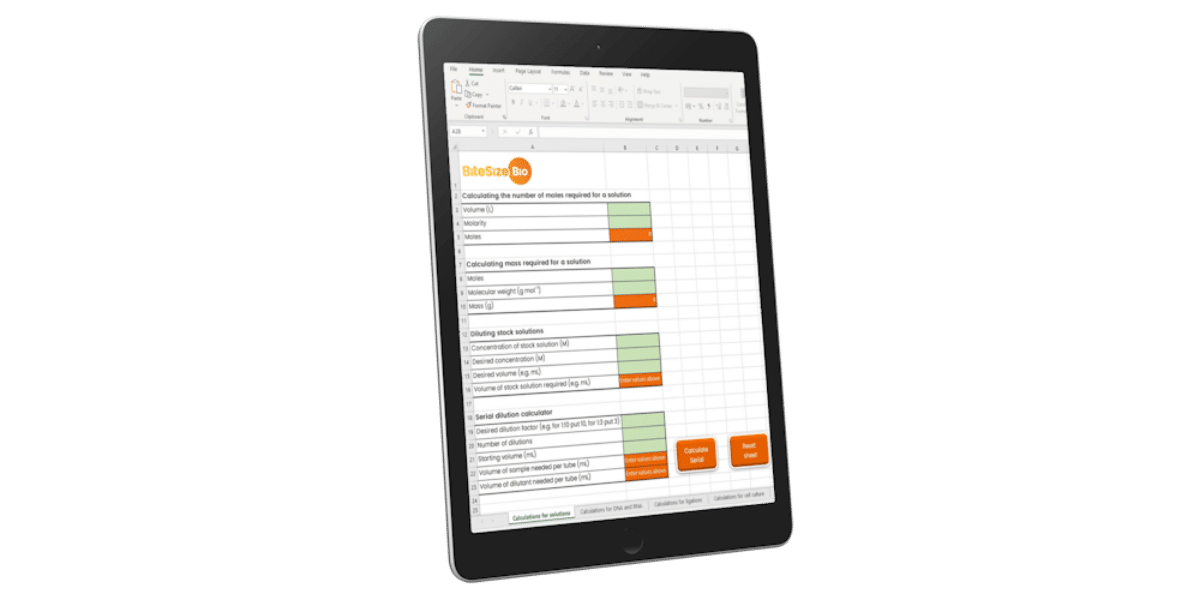

DIGITAL TOOL

Lab Math Calculator

CHEAT SHEET

Lab Math Cheat Sheet

Cairo

Once you’ve made your pretty graphics, you want to be able to save them to a format that retains that beauty, especially when you’re making publication-quality figures. I use Cairo to do that: it can write your figures to PDF, SVG, PostScript, PNG, and several other formats with an easy-to-use syntax.
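
A minimal sketch of saving a figure with Cairo might look like this. The file name and the 6 x 4 inch size are assumptions for the example, and the file is written to a temporary folder so nothing in your working directory is touched.

```r
library(Cairo)

# Hypothetical output location; swap in your own path
out_file <- file.path(tempdir(), "scatter.pdf")

CairoPDF(file = out_file, width = 6, height = 4)  # open a PDF device, size in inches
plot(mtcars$wt, mtcars$mpg, xlab = "Weight (1000 lbs)", ylab = "Miles per gallon")
dev.off()                                         # close the device to finish writing the file
```

The pattern is the same as for the base R devices: open the device, draw, then call dev.off(). A ggplot2 plot would need an explicit print() between opening and closing the device.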

Statistical Packages

lme4 (or nlme)

I’m a bad scientist: I design experiments that require complicated statistics to properly analyze, mostly mixed models that take into account hierarchical structure in my data (e.g. repeated measurements over time, or measuring multiple cells (subsamples) on a coverslip). I mostly use the excellent lme4 to create my mixed models. Others prefer nlme, however. Both are great, so go with the one whose syntax you prefer.
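
To give a feel for the lme4 syntax, here is a sketch of a repeated-measures model using the sleepstudy data set that ships with lme4. The random-intercept structure is just one reasonable choice for this example, not a recommendation for your data.

```r
library(lme4)

# sleepstudy: reaction times measured repeatedly on each Subject over several Days
# (1 | Subject) gives every subject its own intercept
fit <- lmer(Reaction ~ Days + (1 | Subject), data = sleepstudy)

summary(fit)   # variance components and fixed-effect table
fixef(fit)     # fixed-effect estimates: intercept and slope for Days
```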

forecast

Sometimes I work with time series data. When I do, I turn to the rich forecast package to help me analyze the series.
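
As a quick sketch of a typical forecast workflow, the code below fits an automatically selected ARIMA model to the AirPassengers series that ships with R and predicts the next 12 months. The choice of data set and horizon is an assumption for illustration.

```r
library(forecast)

# AirPassengers: monthly airline passenger counts, 1949 to 1960
fit <- auto.arima(AirPassengers)   # picks the ARIMA orders automatically
fc <- forecast(fit, h = 12)        # forecast 12 months ahead

plot(fc)                           # history plus forecast with prediction intervals
```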

zoo

While forecast has a lot of great built-in time series functionality, sometimes I just need a quick, easy rolling mean, rolling standard deviation, or similar. For these functions, I turn to the zoo package.
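
Here is a small sketch of rolling statistics with zoo on a made-up vector. The window width of 3 is an arbitrary choice for the example.

```r
library(zoo)

x <- c(2, 4, 6, 8, 10, 12)   # made-up data

roll_mean <- rollmean(x, k = 3)                  # centered 3-point rolling mean
roll_sd <- rollapply(x, width = 3, FUN = sd)     # rolling standard deviation

# fill = NA pads the result so it has the same length as x
roll_mean_padded <- rollmean(x, k = 3, fill = NA, align = "right")
```

Without padding, a window of width 3 returns two fewer values than the input, which is worth remembering when you add the result back to a data frame.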

spatstat

I’ve recently been learning how to analyze spatial distributions of my model organisms in different situations. For that, I use the spatstat (‘spatial statistics’) package.
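
A minimal sketch of a spatstat analysis, using simulated points rather than real organism positions (the simulated pattern and the choice of Ripley's K function are assumptions for illustration):

```r
library(spatstat)

set.seed(1)
pts <- runifpoint(100)   # 100 points placed uniformly at random in the unit square

K <- Kest(pts)           # Ripley's K function, a summary of clustering vs. regularity
plot(K)                  # compare the estimates against the theoretical curve for randomness
```

With real data you would build the point pattern from your own coordinates with ppp() instead of simulating it.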

Data Wrangling

dplyr

If you’re just starting to use R, you might be computing information the hard way, like I did, using loops. Then I did it the slightly-less-hard way, using fewer loops and apply commands. Finally, I saw the light and started using dplyr, which splits up your data however you wish, applies functions to each piece, then combines it all again at the end. This can be a bit complicated for beginners but is very powerful and intuitive once you grasp apply commands and anonymous functions.
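
The split, apply, and combine idea can be sketched in a few lines. This example groups the built-in mtcars data by number of cylinders; the summary columns are my own choices for illustration.

```r
library(dplyr)

by_cyl <- mtcars %>%
  group_by(cyl) %>%                  # split: one group per cylinder count
  summarise(
    n = n(),                         # apply: number of cars in each group
    mean_mpg = mean(mpg),            # apply: average fuel economy
    sd_mpg = sd(mpg)
  )                                  # combine: one row per group

by_cyl
```

The same result with loops would need a pre-allocated results table and manual bookkeeping for each group.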

Bioinformatics

Bioconductor

Do you work with genomes, chip assays, arrays, or flow cytometry? Then Bioconductor is what you’ll want to use to analyze your data. Bioconductor has a very active community and gets two releases a year, and there is a wide range of resources available to help you get started, such as the Bioconductor courses.

stringr

Perhaps I do it wrong, but I often keep my data in files in which the filename itself has important information relevant to the data. I may have to load hundreds of these files into R as data frames, and often want to parse information from the filename in the process. I use stringr to do this: stringr lets you do all sorts of useful things to strings, like find patterns.
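
As a sketch of the filename-parsing idea, assume (hypothetically) that files are named like genotype_dayNN_repN.csv. The naming scheme and the column names below are made up for the example.

```r
library(stringr)

# Hypothetical file names that encode genotype, day, and replicate
files <- c("wt_day03_rep1.csv", "wt_day10_rep2.csv", "mutant_day03_rep1.csv")

# str_match() returns a matrix: column 1 is the full match,
# the remaining columns are the captured groups
parts <- str_match(files, "^([a-z]+)_day(\\d+)_rep(\\d+)\\.csv$")

meta <- data.frame(
  file = files,
  genotype = parts[, 2],
  day = as.integer(parts[, 3]),
  replicate = as.integer(parts[, 4])
)

meta
```

In practice the file vector would come from list.files(), and the metadata could be attached to each data frame as it is loaded.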

Sharing

knitr

If you look at R blogs on the web, I bet you’ve seen some very nice ones that mix code, readable text, and output in very pretty ways. It turns out that there is an R package which makes this easy to do! knitr lets you make ‘R markdown’ files which combine real code, code results, and text with excellent formatting, which can be exported as webpages and slideshows. With knitr, you may not even have to use PowerPoint!
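
To show roughly what an R markdown file contains, this sketch writes a tiny one to a temporary folder and knits it to plain markdown. The file names and the report content are made up for the example; in RStudio you would normally just create a new R Markdown file and press the Knit button.

```r
library(knitr)

# Hypothetical file locations in a temporary folder
rmd_file <- file.path(tempdir(), "report.Rmd")
md_file <- file.path(tempdir(), "report.md")

# A tiny R markdown document: text, inline code, and one code chunk
writeLines(c(
  "# Fuel economy",
  "",
  "The mean mpg in mtcars is `r round(mean(mtcars$mpg), 1)`.",
  "",
  "```{r mpg-summary}",
  "summary(mtcars$mpg)",
  "```"
), rmd_file)

# knit() runs the code and writes a markdown file with the results filled in
knit(rmd_file, output = md_file, quiet = TRUE)

knitted <- readLines(md_file)
```

Turning the result into a webpage or slideshow is an extra step that this sketch leaves out.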

shiny

Sick of your boss asking you to re-run some analysis with different parameters? Wanting to show students how the shape of a function changes with different variables? Then shiny is for you. Shiny lets you put together interactive web applications that use R code and R graphics.
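
Here is a minimal sketch of a shiny app in the spirit of the teaching example: a slider controls the standard deviation of a normal curve. The input name, slider range, and plot are my own assumptions for illustration.

```r
library(shiny)

# User interface: one slider and one plot
ui <- fluidPage(
  titlePanel("Shape of a normal curve"),
  sliderInput("sd", "Standard deviation", min = 0.5, max = 5, value = 1, step = 0.5),
  plotOutput("curve")
)

# Server logic: redraw the curve whenever the slider changes
server <- function(input, output) {
  output$curve <- renderPlot({
    x <- seq(-10, 10, length.out = 400)
    plot(x, dnorm(x, sd = input$sd), type = "l", ylab = "Density")
  })
}

app <- shinyApp(ui, server)
# In an interactive session, start the app with: runApp(app)
```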

What’d I miss? Add your faves in the comments!
