The first hurdle in learning about statistics is the language. It’s terrible to be reading about a particular statistical test and have to be looking up the meaning of every third word.

The type of data you have, the number of measurements, the range of your data values and how your data cluster are all described using statistical terms. To determine which type of statistical test is the best fit for analyzing your data, you first need to learn some statistics lingo.

Variables

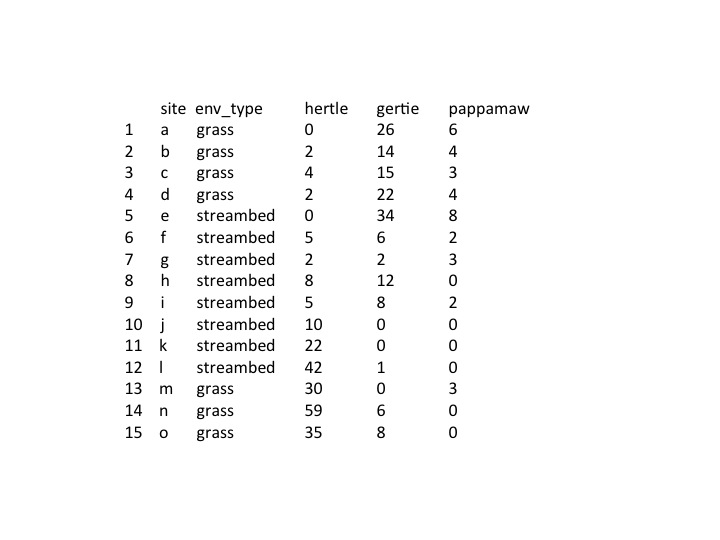

Variables are anything that can be measured; they are your data points, and the type you have affects the statistical test you use. Measurement or numerical variables are the main type of variables that are obtained in biological research, so I’ll focus on these.

Measurement variables can be either continuous or discrete. Continuous variables can take any value between two points (for example, half and quarter measurements), while discrete variables are restricted to whole numbers (such as a ranking from 1 to 5). As I will show you later, some tests can only be used with continuous variables, while others can accommodate discrete values.

Sample size (n)

Sample size refers to the number of data points in your set of data. In general, the larger your sample size, the better. However, factors such as time, cost and practicality limit the sample size you can use. As an absolute minimum you need an n of 3 to perform a statistical test, but look at publications with similar experiments to determine what is considered acceptable. The size of your sample will also affect how precisely you can estimate the mean and variance of your data (see below).

Data Spread

How spread out your data is gives you an idea of how reliable it is – data with low variance is more reliable than data with high variance. It is therefore useful to know how variable your data is, and there are several simple measures for determining this.

Variance

Variance is the simplest measure of the spread of the data and is the average of the squared differences from the mean. It tells us how spread out the data is around the mean; the larger the number, the more spread out the data (higher variance). To calculate variance, first find the mean of the data points. Next, find the difference between each sample and the mean and then square the result. Finally, average the squared differences.

Note: if you are calculating sample variance (which you most likely are, since this means you are measuring just a sample of a population rather than the entire population) then you divide by n-1 when finding the average of the squared differences rather than n. This is to correct for the fact that you are only estimating the variance (since you are not measuring the entire population) rather than accurately computing it.
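The steps above can be sketched in a few lines of Python. This is a minimal illustration rather than a recommended workflow: the data values are made up, and the function name and its `sample` flag are my own choices for the example.

```python
import statistics

# Hypothetical measurements, made up for illustration
data = [4.0, 6.0, 8.0, 10.0, 12.0]


def variance(values, sample=True):
    """Average of the squared differences from the mean.

    Divides by n - 1 for a sample, or by n for an entire population.
    """
    n = len(values)
    mean = sum(values) / n
    squared_diffs = [(x - mean) ** 2 for x in values]
    divisor = n - 1 if sample else n
    return sum(squared_diffs) / divisor


sample_var = variance(data, sample=True)        # divides by n - 1
population_var = variance(data, sample=False)   # divides by n

print(sample_var)      # 10.0
print(population_var)  # 8.0

# The standard library gives the same answers
print(statistics.variance(data), statistics.pvariance(data))
```

Here the mean is 8 and the squared differences add up to 40, so dividing by n-1 (4) gives 10 while dividing by n (5) gives 8. The sample variance is always the larger of the two.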

Standard Deviation

The standard deviation (SD) is the most widely used measure of the spread of the data. SD is simply the square root of the variance and similarly tells us how much the samples deviate from the mean. The standard deviation is often preferred to the variance because it is expressed in the same units as the data, making the results easier to interpret and display.
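As a quick sketch of that relationship, here is the SD computed as the square root of the sample variance, using Python's built-in `statistics` module. The data values are made up for illustration.

```python
import math
import statistics

# Hypothetical measurements, made up for illustration
data = [4.0, 6.0, 8.0, 10.0, 12.0]

sample_variance = statistics.variance(data)  # divides by n - 1
sd = math.sqrt(sample_variance)

print(f"variance = {sample_variance:.2f}")
print(f"SD       = {sd:.2f}")

# statistics.stdev computes the sample SD directly
print(f"stdev    = {statistics.stdev(data):.2f}")
```

If the measurements were in millimetres, the variance would be in square millimetres while the SD would be back in millimetres.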

Standard Error

Standard error (SE) and SD are often thought to be interchangeable, but this isn’t the case. While SD tells you about the variability of your data, SE provides information on the precision of the sample mean.

SE is calculated by dividing the variance by n and taking the square root of this number, which is the same as dividing the SD by the square root of n. Because of this division by the sample size, SE decreases as sample size increases. SE is therefore often quoted instead of the SD because it tends to produce smaller numbers, but choosing it over the SD for this reason alone can be misleading.
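To see how sample size feeds into the SE, here is a small Python sketch. The function name and the data are hypothetical, and the larger data set is simply the same five values repeated, which is an artificial way of raising n while keeping the spread similar.

```python
import math
import statistics


def standard_error(values):
    """Square root of (sample variance divided by n)."""
    n = len(values)
    return math.sqrt(statistics.variance(values) / n)


# Hypothetical measurements, made up for illustration
small = [4.0, 6.0, 8.0, 10.0, 12.0]   # n = 5
large = small * 4                      # same values repeated, n = 20

se_small = standard_error(small)
se_large = standard_error(large)

print(f"n = {len(small):2d}: SD = {statistics.stdev(small):.2f}, SE = {se_small:.2f}")
print(f"n = {len(large):2d}: SD = {statistics.stdev(large):.2f}, SE = {se_large:.2f}")
```

The SD barely changes between the two sets, while the SE roughly halves when n goes from 5 to 20. That is why a small SE on its own says little about how variable the data is.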

Distribution

Distribution, as the name implies, describes how your data is distributed. There are many ways your data can be distributed and this can affect the statistical test you use. The most well-known distribution is of course the normal distribution, which has a bell shape. A normal distribution means the data is symmetrical, with values higher and lower than the mean equally likely, and with the frequency of values dropping off quickly the further they are from the mean.

Many non-normal distributions are skewed, meaning the data is not symmetrical and the mean is usually not in the middle. Many common statistical tests assume that the distribution is normal, so beware: these tests are not valid for highly skewed data.
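One rough way to spot skew is to compare the mean with the median (the middle value). The Python sketch below uses two made-up data sets. It is only a quick check under that assumption, not a formal test for normality.

```python
import statistics

# Hypothetical data sets, made up for illustration
symmetric = [2.0, 4.0, 5.0, 6.0, 6.0, 7.0, 8.0, 10.0]
skewed = [1.0, 1.0, 2.0, 2.0, 3.0, 4.0, 9.0, 26.0]

for name, values in [("symmetric", symmetric), ("skewed", skewed)]:
    mean = statistics.mean(values)
    median = statistics.median(values)
    print(f"{name:9s}: mean = {mean:.1f}, median = {median:.1f}")
```

Both sets have a mean of 6, but in the skewed set a few large values drag the mean well above the median of 2.5, so the mean no longer sits in the middle of the data.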

p value

The p value is what you are searching for: the number that will tell you whether you have achieved the holy grail of science, statistical significance! Strictly speaking, it is the probability of getting a result at least as extreme as yours if there were no real effect. It is generally considered that a result with a p value less than 0.05 is unlikely to have occurred by random chance and is therefore statistically significant. In contrast, results with a p value greater than 0.05 are not considered significant, as it cannot be ruled out that they occurred by random chance.

The p value is affected by sample size, and if your sample size is too small you may not obtain a significant result even if the observed effect is real. Therefore you need to ensure you have a suitable sample size.
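To make the link between sample size and p value concrete, here is a minimal Python sketch. Note the assumptions: it uses an exact permutation test, which I have picked because it needs only the standard library and is not a test named in this article, and the function name and data are made up for illustration.

```python
from itertools import combinations


def permutation_p_value(group_a, group_b):
    """Two-sided exact permutation test on the difference in group means.

    Returns the fraction of all possible relabellings of the pooled data
    whose difference in means is at least as large as the observed one.
    """
    pooled = list(group_a) + list(group_b)
    n_a, n_b = len(group_a), len(group_b)
    total = sum(pooled)
    observed = abs(sum(group_a) / n_a - sum(group_b) / n_b)

    as_extreme = 0
    relabellings = 0
    for indices in combinations(range(len(pooled)), n_a):
        sum_a = sum(pooled[i] for i in indices)
        diff = abs(sum_a / n_a - (total - sum_a) / n_b)
        # Small tolerance so floating-point rounding does not hide ties
        if diff >= observed - 1e-9:
            as_extreme += 1
        relabellings += 1
    return as_extreme / relabellings


# Hypothetical measurements, made up for illustration
control = [4.1, 4.5, 4.8, 5.0, 5.2]
treated = [5.9, 6.1, 6.4, 6.8, 7.0]

p_full = permutation_p_value(control, treated)            # 5 per group
p_small = permutation_p_value(control[:3], treated[:3])   # 3 per group

print(f"n = 5 per group: p = {p_full:.4f}")
print(f"n = 3 per group: p = {p_small:.4f}")
```

In both cases every treated value is higher than every control value. With 5 per group there are 252 possible relabellings and only 2 are as extreme as the observed one, so p is about 0.008. With 3 per group there are only 20 relabellings, so the smallest p this particular test can ever give is 2/20 = 0.1, which is above 0.05 no matter how clear the effect looks.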

Paired or unpaired data

One factor that will be important in determining which type of test to use is whether or not your data is paired. Paired data comes from matched measurements, where each value in one group corresponds to a specific value in the other. For example, if you are comparing two drugs and you give drug A to 10 people one day and drug B to the same 10 people (or to 10 people individually matched for age and population) another day, your data is matched and you can use a paired test. If 10 people are given drug A but 15 different people are given drug B, the measurements cannot be matched up and your data is unpaired.
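Here is a small Python sketch of why pairing matters. The numbers are made up, and it assumes that position i in both lists belongs to the same (or a matched) subject. It compares the standard error of the per-subject differences with the standard error you would get by treating the two lists as independent groups.

```python
import math
import statistics

# Hypothetical responses, made up for illustration.
# Position i in both lists is assumed to be the same (or a matched) subject.
drug_a = [10.0, 12.0, 14.0, 16.0, 18.0]
drug_b = [11.0, 13.5, 15.0, 17.5, 19.0]
n = len(drug_a)

# Paired view: work with the per-subject differences
differences = [b - a for a, b in zip(drug_a, drug_b)]
mean_difference = statistics.mean(differences)
se_paired = statistics.stdev(differences) / math.sqrt(n)

# Unpaired view: treat the two lists as independent groups
se_unpaired = math.sqrt(
    statistics.variance(drug_a) / n + statistics.variance(drug_b) / n
)

print(f"mean difference = {mean_difference:.2f}")
print(f"SE (paired)     = {se_paired:.2f}")
print(f"SE (unpaired)   = {se_unpaired:.2f}")
```

The subjects differ a lot from one another, but each one responds to drug B by a similar amount. Looking at the differences removes the subject-to-subject variation, so the paired SE is much smaller than the unpaired one.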

Parametric vs non-parametric test

A parametric test is used when the data is assumed to follow a normal distribution and the groups are assumed to have equal variance. In contrast, non-parametric tests make few or no assumptions about distribution or variance. In general, non-parametric tests are less powerful but more conservative, so any significance you find with one is more likely to be real.

Type 1 and Type 2 errors

A statistical test can give a false result, either by chance or because the wrong test is used or a test is used incorrectly. Two types of errors can be encountered.

A Type 1 error is a false positive: you conclude that a result is statistically significant when in fact there is no real effect. A Type 2 error is a false negative: a real effect is missed because the test does not reach significance.

Now that you have these terms in your repertoire, it is time to start talking statistical tests. In the next few posts I will talk about the different tests you can use depending on the type of data you have.
