Fortunately, those of us who have learned how to sequence know that aligning sequences is a lot easier and less time-consuming than generating them. Whether you’re using sequencing gels, Sanger-based methods, or the latest in pyrosequencing or Ion Torrent technologies, obtaining, manipulating and analyzing your sequences has never been easier.

We’re going to take a look at just the basics of sequence alignment to get you started.

How many sequences can I align?

You need a minimum of 2 sequences to perform an alignment. Comparing exactly 2 sequences is called a “pairwise” alignment. Aligning 3 or more sequences at a time is a multiple sequence alignment, which requires a different algorithm, e.g. MUSCLE or one of the Clustal algorithms such as ClustalW.
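
To make the idea of a pairwise alignment concrete, here is a minimal sketch of a global alignment using the classic Needleman-Wunsch dynamic programming approach. The function name and the scoring values (match +1, mismatch -1, gap -1) are assumptions chosen for illustration; real alignment programs use more elaborate scoring schemes and are far faster.

```python
def needleman_wunsch(a, b, match=1, mismatch=-1, gap=-1):
    """Globally align two sequences; returns (aligned_a, aligned_b, score).

    The scoring values are illustrative assumptions, not recommended defaults.
    """
    rows, cols = len(a) + 1, len(b) + 1
    score = [[0] * cols for _ in range(rows)]

    # First row and column: aligning a prefix against nothing costs only gaps.
    for i in range(1, rows):
        score[i][0] = i * gap
    for j in range(1, cols):
        score[0][j] = j * gap

    def pair_score(x, y):
        return match if x == y else mismatch

    # Fill the table: each cell is the best of diagonal, up, or left.
    for i in range(1, rows):
        for j in range(1, cols):
            score[i][j] = max(
                score[i - 1][j - 1] + pair_score(a[i - 1], b[j - 1]),
                score[i - 1][j] + gap,
                score[i][j - 1] + gap,
            )

    # Trace back from the bottom-right corner to recover one optimal alignment.
    out_a, out_b = [], []
    i, j = len(a), len(b)
    while i > 0 or j > 0:
        if (i > 0 and j > 0 and
                score[i][j] == score[i - 1][j - 1] + pair_score(a[i - 1], b[j - 1])):
            out_a.append(a[i - 1])
            out_b.append(b[j - 1])
            i, j = i - 1, j - 1
        elif i > 0 and score[i][j] == score[i - 1][j] + gap:
            out_a.append(a[i - 1])
            out_b.append("-")
            i -= 1
        else:
            out_a.append("-")
            out_b.append(b[j - 1])
            j -= 1

    return "".join(reversed(out_a)), "".join(reversed(out_b)), score[-1][-1]


aligned_a, aligned_b, total = needleman_wunsch("GATTACA", "GATACA")
print(aligned_a)
print(aligned_b)
print("score:", total)
```

Because the table has one cell per pair of positions, time and memory grow with the product of the two sequence lengths, which is one reason long sequences take longer to align.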

You can align several hundred to several thousand sequences if you wish, but several factors determine whether this is straightforward or a time hog, if not impossible. First, you must choose an appropriate algorithm. For instance, the alignment program MUSCLE can usually handle large data sets while keeping a premium on accuracy. For some perspective, I can usually align ~750 sequences of 1000 nucleotides each in about an hour using MUSCLE. Second, to align a large number of sequences you must have sufficient computer memory and storage.

What is the difference between similarity and identity?

Identity is the degree of correlation between 2 un-gapped sequences: a position counts as identical when the amino acids or nucleotides there are an exact match, and identity is usually reported as the percentage of such positions. Generally, an identity of 25% or higher suggests the potential for similarity of function; an identity of 18-25% implies similarity of structure or function. It is important to note that 2 or more completely unrelated sequences can have 20% identity or greater, so this is not a hard and fast rule. Similarity is the degree of resemblance between 2 sequences when they are compared: a position counts as similar when the amino acids or nucleotides there have some properties in common (for instance, charge or hydrophobicity) but are not identical. A high percentage of similar residues can also suggest a conserved function or structure.
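
As a rough illustration of the distinction, the sketch below counts identical and similar-but-not-identical positions in two already-aligned protein sequences. The function name and the residue groupings in `SIMILAR_GROUPS` are assumptions made for this example; real tools judge similarity with substitution matrices, and conventions differ on whether gap columns count in the denominator (here they are skipped).

```python
# Assumption: a simple, illustrative grouping of amino acids by shared properties.
SIMILAR_GROUPS = [
    set("ILVM"),   # aliphatic / hydrophobic
    set("FWY"),    # aromatic
    set("KRH"),    # positively charged
    set("DE"),     # negatively charged
    set("STNQ"),   # polar, uncharged
]


def identity_and_similarity(seq_a, seq_b, gap_char="-"):
    """Return (percent identical, percent similar-but-not-identical).

    Both sequences must already be aligned (same length). Columns where
    either sequence has a gap are skipped.
    """
    if len(seq_a) != len(seq_b):
        raise ValueError("aligned sequences must have the same length")

    compared = identical = similar = 0
    for x, y in zip(seq_a.upper(), seq_b.upper()):
        if x == gap_char or y == gap_char:
            continue
        compared += 1
        if x == y:
            identical += 1
        elif any(x in group and y in group for group in SIMILAR_GROUPS):
            similar += 1

    if compared == 0:
        return 0.0, 0.0
    return 100 * identical / compared, 100 * similar / compared


pct_identity, pct_similar = identity_and_similarity("MKVLD-", "MRILEA")
print(pct_identity, pct_similar)
```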

What is a “consensus” sequence?

A consensus sequence usually appears at the top of your alignment worktable, and each nucleotide (or amino acid) in it is the residue that appears most frequently at that position across your aligned sequences. For instance, if you align 5 sequences, and the nucleotides at position 20 are A, A, T, A, and G, then the consensus sequence will have an A at position 20. Consensus sequences can be very useful when examining evolutionary relationships between sequences with high degrees of identity. The consensus is also useful for identifying potential gaps in your aligned sequences.
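
The majority rule described above can be sketched in a few lines. This is a minimal example, not how any particular alignment program builds its consensus: the function name is hypothetical, gaps are ignored when counting, and ties go to whichever residue appears first in the column, which is an arbitrary assumption.

```python
from collections import Counter


def consensus(aligned_sequences, gap_char="-"):
    """Return the most frequent residue at each column of an alignment."""
    if not aligned_sequences:
        return ""
    length = len(aligned_sequences[0])
    if any(len(seq) != length for seq in aligned_sequences):
        raise ValueError("all aligned sequences must have the same length")

    result = []
    for column in zip(*aligned_sequences):
        # Count residues in this column, ignoring gaps.
        counts = Counter(residue for residue in column if residue != gap_char)
        # A column made only of gaps stays a gap in the consensus.
        result.append(counts.most_common(1)[0][0] if counts else gap_char)
    return "".join(result)


# The second column reproduces the A, A, T, A, G example from the text.
print(consensus(["GA", "CA", "GT", "GA", "GG"]))
```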

Why are gaps important?

A gap is one or more spaces in a single string of a given alignment and usually corresponds to an insertion or deletion in one or more sequences within the alignment. The insertion or deletion can be an artifact of sequencing chemistry and not indicative of the authentic DNA sequence. According to the European Bioinformatics Institute, there are several other potential explanations for gaps:

- A single mutation can create a gap (very common).

- Unequal crossover in meiosis can lead to insertion or deletion of strings of bases.

- DNA slippage in the replication procedure can result in the repetition of a string.

- Retrovirus insertions.

- Translocations of DNA between chromosomes.
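
When inspecting an alignment, it can help to list where the gaps fall in each aligned sequence. The sketch below is one simple way to do that; the function name is hypothetical, and it assumes gaps are written as "-" (the usual convention, though some formats use other characters).

```python
import re


def gap_runs(aligned_sequence, gap_char="-"):
    """Return (start, length) for each run of gap characters (0-based start)."""
    pattern = re.escape(gap_char) + "+"
    return [
        (match.start(), match.end() - match.start())
        for match in re.finditer(pattern, aligned_sequence)
    ]


print(gap_runs("AC--GT-A"))
```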

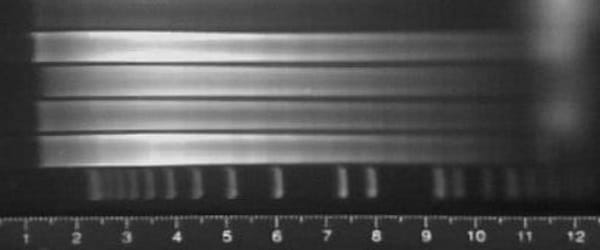

How do I know my sequence data is good?

Alphabet soup. Lots of As, Ts, Cs and Gs. Regardless of how you obtain your sequences, the overall success and accuracy of your sequence alignments and subsequent analyses depend entirely on the quality of your sequence data. Things like solid upstream preparations, primer design and reagent quality can make you a hero... or a zero. So, in scientific terms, the quality of your sequence data is directly proportional to the success and robustness of your alignments. You know: ‘garbage in, garbage out’.

In most cases, your raw data is “scored” and cleaned up by the sequencer software, resulting in your finished, exportable sequence. Quality can be scored in many different ways, depending on the technology and chemistry used, using criteria such as signal strength, the number of contiguous nucleotides read and the ease with which each nucleotide is determined, e.g. a clean, unobstructed peak in a chromatogram. Other than As, Ts, Cs and Gs, it’s important to understand the other codes that may appear in your data, such as the IUPAC ambiguity codes (for example, N for any nucleotide, or R for A or G).
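
One quick sanity check on a finished sequence is to count how many positions carry an ambiguity code rather than a plain A, C, G or T. The sketch below uses the standard IUPAC nucleotide ambiguity codes; the function name is hypothetical, and what fraction of ambiguous calls is acceptable depends on your application, so no threshold is suggested here.

```python
from collections import Counter

# Standard IUPAC nucleotide ambiguity codes and the bases each one can stand for.
IUPAC_AMBIGUITY = {
    "R": "AG", "Y": "CT", "S": "CG", "W": "AT", "K": "GT", "M": "AC",
    "B": "CGT", "D": "AGT", "H": "ACT", "V": "ACG", "N": "ACGT",
}


def ambiguity_report(sequence):
    """Return (counts of each ambiguity code, fraction of ambiguous positions)."""
    counts = Counter(
        base for base in sequence.upper() if base in IUPAC_AMBIGUITY
    )
    fraction = sum(counts.values()) / len(sequence) if sequence else 0.0
    return dict(counts), fraction


codes, fraction = ambiguity_report("ACGTNRYA")
print(codes, fraction)
```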

Some helpful tips

- I would highly recommend getting in the habit of saving your work early and often!

- Get used to FASTA file formats – you’ll need these when downloading from clearing houses like GenBank (https://www.ncbi.nlm.nih.gov/BLAST/blastcgihelp.shtml)

- In general, if you’re aligning sequences with long terminal repeat (LTR) regions, you might try deleting these regions as long as they are all identical in composition and length – this will speed up your alignments without sacrificing accuracy.

- The longer your sequences, the longer the time required.
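
If FASTA is new to you, the format is simple: each record starts with a header line beginning with ">", followed by one or more lines of sequence. Here is a minimal reader as a sketch; the function name and the example records are made up for illustration, and established libraries handle edge cases that this does not.

```python
import io


def read_fasta(handle):
    """Yield (header, sequence) pairs from an open FASTA file or file-like object."""
    header, chunks = None, []
    for line in handle:
        line = line.strip()
        if not line:
            continue  # skip blank lines
        if line.startswith(">"):
            # A new header closes the previous record, if there was one.
            if header is not None:
                yield header, "".join(chunks)
            header, chunks = line[1:], []
        elif header is not None:
            chunks.append(line)
    if header is not None:
        yield header, "".join(chunks)


# Hypothetical two-record example; with a real file, use open("yourfile.fasta").
example = io.StringIO(">seq1 hypothetical example\nACGTAC\nGTTA\n>seq2\nTTGACA\n")
for name, sequence in read_fasta(example):
    print(name, len(sequence))
```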

Stay tuned for the next article in this series, in which we’ll talk about the different sequence alignment programs that are available.
