This column is loaded with pop quizzes for you to test yourself on. If you haven’t already done so, catch up on yesterday’s piece on hypothesis testing for a refresher.

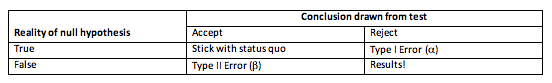

Take a gander at the table below for a summary of the two types of error that can result from hypothesis testing.

Type I Errors occur when we reject a null hypothesis that is actually true; the probability of this occurring is denoted by alpha (α).

Type II Errors occur when we accept a null hypothesis that is actually false; their probability is called beta (β). As you can see from the table, the other two outcomes are to accept a true null hypothesis or to reject a false null hypothesis. Both of these are correct decisions, though one is far more exciting than the other (and probably easier to get published).
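The four cells of the table can be spelled out as a lookup keyed on two yes/no questions: is the null hypothesis actually true, and did we reject it? Here is a minimal Python sketch. The names `OUTCOMES` and `classify_outcome` are hypothetical and do not come from any library.

```python
# Keys are (is the null hypothesis actually true?, did we reject it?)
OUTCOMES = {
    (True, True): "Type I Error: rejected a true null (probability alpha)",
    (True, False): "Correct: accepted a true null",
    (False, True): "Correct: rejected a false null",
    (False, False): "Type II Error: accepted a false null (probability beta)",
}


def classify_outcome(null_is_true: bool, rejected_null: bool) -> str:
    """Return which cell of the error table a single decision falls into."""
    return OUTCOMES[(null_is_true, rejected_null)]


print(classify_outcome(null_is_true=True, rejected_null=True))
```

In practice we never know the first key, which is exactly the problem discussed next.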

It all looks really simple (I hope) when you put it in a table like that. The trouble is that we don’t know whether the null hypothesis is true or not; that’s the whole point of statistics! So instead we rely on the probabilities of each type of error occurring. As in life, nothing is ever easy: in statistics we cannot minimise the probability of both errors simultaneously. For a given experiment (same sample size, same underlying effect), reducing the probability of a Type I Error automatically increases the probability of a Type II Error, and vice versa.
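To put numbers on this trade-off, the sketch below computes β for a range of α values while holding the experiment fixed. It assumes the simplest textbook case, a one-sided, one-sample z-test with a known standard deviation; the effect size (0.5), standard deviation (1.0) and sample size (20) are made-up values chosen only for illustration, and `type_ii_probability` is a hypothetical helper name.

```python
from statistics import NormalDist

STD_NORMAL = NormalDist()  # standard normal distribution: mean 0, sd 1


def type_ii_probability(alpha: float, effect: float, sd: float, n: int) -> float:
    """Beta for a one-sided, one-sample z-test of H0: mean = 0 vs H1: mean = effect > 0.

    Assumes the population standard deviation `sd` is known.
    """
    # Reject H0 when the z statistic exceeds this critical value.
    z_crit = STD_NORMAL.inv_cdf(1 - alpha)
    # If H1 is true, the z statistic is normal with this mean (and sd 1).
    shift = effect * n ** 0.5 / sd
    # Beta: chance the statistic still lands below the critical value under H1.
    return STD_NORMAL.cdf(z_crit - shift)


# Same hypothetical experiment each time; only the Type I threshold changes.
for alpha in (0.10, 0.05, 0.01, 0.001):
    beta = type_ii_probability(alpha, effect=0.5, sd=1.0, n=20)
    print(f"alpha = {alpha:<5}  beta = {beta:.3f}")
```

As α shrinks from 0.10 to 0.001, β climbs steadily. Rerunning with a larger `n` lowers β at every α, which is why collecting more data is the one way to push both error rates down at once.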

Pop Quiz: Given the conundrum, which type of error do we focus on minimising? You’ve got the next three paragraphs to come up with your answer (no looking at your neighbours, no texting, papers face-down on your desk when you’re finished).

For a working example I’ll depart from biology for a moment and move to medicine. Pharmaceutical Company Delta-Theta has manufactured a new pill they claim relieves headaches. As all good pharmaceutical companies do, they have conducted a double-blind study* to test the effects of their pill.

Pop Quiz: Following on from last week, you should be able to tell me the null and alternate hypotheses (Go!).

The null hypothesis is that the medicine does not relieve headaches (or, that it does no better than a placebo pill). So the alternate states that the pill does relieve headaches, at least in comparison to a placebo.

Pop Quiz: What, then, would constitute a Type I and a Type II Error?

A Type I Error means rejecting a true null hypothesis: in truth the pill does not relieve headaches, but the pharmaceutical company concludes that it does. A Type II Error, by contrast, means accepting a false null hypothesis: the pill actually does relieve headaches, but Delta-Theta concludes that it doesn’t. [If you’re still getting your head around which error is which, don’t worry; I still needed to check my table to make sure I had them the right way around.]
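To watch both errors happen, here is a small Python simulation of many repeated Delta-Theta trials. Everything in it is an assumption made for illustration: "headache relief" scores are normally distributed with a known standard deviation of 1, there are 30 patients per arm, the pill adds 0.5 to the average score when it works, and a simple one-sided z-test at α = 0.05 stands in for whatever analysis a real trial would use. The names `pill_beats_placebo` and `error_rates` are hypothetical.

```python
import random
from statistics import NormalDist, fmean

ALPHA = 0.05
Z_CRIT = NormalDist().inv_cdf(1 - ALPHA)  # one-sided critical value, about 1.645


def pill_beats_placebo(rng: random.Random, true_effect: float,
                       n_per_group: int = 30, sd: float = 1.0) -> bool:
    """Simulate one hypothetical double-blind trial; return True if H0 is rejected."""
    # Made-up relief scores; the pill adds `true_effect` on average.
    placebo = [rng.gauss(0.0, sd) for _ in range(n_per_group)]
    pill = [rng.gauss(true_effect, sd) for _ in range(n_per_group)]
    # Two-sample z statistic, assuming the score sd is known.
    standard_error = sd * (2 / n_per_group) ** 0.5
    z = (fmean(pill) - fmean(placebo)) / standard_error
    # One-sided: H1 says the pill relieves headaches better than placebo.
    return z > Z_CRIT


def error_rates(n_trials: int = 4000, effect: float = 0.5, seed: int = 1):
    """Return (Type I rate, Type II rate) estimated from simulated trials."""
    rng = random.Random(seed)
    # World 1: the pill does nothing, so every rejection is a Type I Error.
    type_i = sum(pill_beats_placebo(rng, 0.0) for _ in range(n_trials)) / n_trials
    # World 2: the pill works, so every failure to reject is a Type II Error.
    type_ii = sum(not pill_beats_placebo(rng, effect) for _ in range(n_trials)) / n_trials
    return type_i, type_ii


type_i, type_ii = error_rates()
print(f"Type I rate:  {type_i:.3f}  (alpha = {ALPHA})")
print(f"Type II rate: {type_ii:.3f}")
```

In the world where the pill is useless, roughly 5% of trials still "find" an effect. In the world where it works, a sizeable fraction of trials miss it; under these made-up settings theory puts β at about 0.385.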

So, finally, we can return to the question I posed earlier: which type of error do we focus on minimising? I’ve used a medical example because of the hefty weight attached to right and wrong decisions. A Type II Error would falsely conclude that the pill does no good, so it wouldn’t be put on the market, resulting in a loss for the company. A Type I Error, however, concludes that the pill works when actually it doesn’t. The pill goes into production and enters the market; patients take it and get no relief from their headaches. Loss for the consumer.

But what if the medicine wasn’t for headaches? What if it was for HIV, or cancer, or diabetes? What if a patient stopped taking their regular medication in order to take this new pill? Hence the hefty cost of a wrong decision.

Thus, we aim to minimise the probability of a Type I Error, at the cost of a higher probability of Type II Errors. The same argument holds for publishing biological results. Which is more embarrassing and career-damaging: publishing incorrect results (Type I) or failing to recognise and publish significant results (Type II)?

Biologists have reached a consensus on the probability of a Type I Error we are prepared to accept: the magical 5% threshold (α = 0.05). This leads to a discussion of p-values, our next subject.
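What does α = 0.05 look like as a decision rule? Assuming, as a simplification, that the test statistic follows a standard normal distribution when the null hypothesis is true (a z-test), the 5% threshold translates into a cutoff on that statistic. This Python sketch computes the cutoff for a one-sided test (all 5% in one tail) and a two-sided test (2.5% in each tail).

```python
from statistics import NormalDist

ALPHA = 0.05  # the conventional Type I Error rate
std_normal = NormalDist()

# One-sided test: the whole 5% rejection region sits in one tail.
one_sided = std_normal.inv_cdf(1 - ALPHA)
# Two-sided test: the 5% is split, 2.5% in each tail.
two_sided = std_normal.inv_cdf(1 - ALPHA / 2)

print(f"one-sided cutoff: z > {one_sided:.3f}")
print(f"two-sided cutoff: |z| > {two_sided:.3f}")

# Sanity check: under a true null, the statistic passes each cutoff 5% of the time.
assert abs((1 - std_normal.cdf(one_sided)) - ALPHA) < 1e-6
assert abs(2 * (1 - std_normal.cdf(two_sided)) - ALPHA) < 1e-6
```

The cutoffs come out at about 1.645 and 1.960: any result beyond them is declared significant, knowing that a true null will cross that line 5% of the time.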

Until then, you are very welcome to leave your comments and feedback on the statistics series thus far.

*A double-blind study is one in which neither the patient nor the doctor knows whether the actual drug or a placebo has been administered. The purpose is to prevent any change in the doctor’s behaviour that could arise if he or she knew which treatment the patient had been allocated, e.g. giving less attention to patients on the placebo. I highly recommend pretty much anything Ben Goldacre has written if you wish to delve further into this fascinating subject of human psychology and medical testing.
