Next-generation DNA sequencing (NGS) is a revolutionary technology that lets biologists and medical scientists collect massive amounts of DNA sequence data both rapidly and cheaply. It is having a huge impact on many aspects of biology and medicine because it can be applied in so many different ways. DNA can be sequenced from a new species, from individual patients, from cells in tissue culture, from bacterial strains, or from a mixed collection of DNA molecules obtained from any environment or from any location on (or in) the human body (metagenomics). DNA sequencing can also serve as an assay, in which the sequence information reads out the locations on the genome where interesting things are happening, such as the binding of transcription factors, epigenetic modifications of DNA and histone proteins, or the three-dimensional folding of chromosomes. Finally, it can be used as a quantitative measure of gene expression by converting mRNA to cDNA and counting the number of transcripts observed from each gene. For this NGS Channel, we will define NGS as a technology that simultaneously determines the sequence of DNA bases from many thousands (or millions) of DNA templates in a single biochemical reaction volume.
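To make the idea of sequencing as a quantitative measure of gene expression concrete, here is a minimal sketch in Python. It assumes each cDNA read has already been assigned to a gene; the read list, the gene names chosen, and the function names are all hypothetical and purely for illustration. Real expression pipelines also align reads and correct for factors such as gene length, which this sketch ignores.

```python
from collections import Counter

# Hypothetical input: the gene each cDNA read was assigned to.
# In a real experiment this list would hold millions of entries.
read_gene_assignments = [
    "GAPDH", "ACTB", "GAPDH", "TP53",
    "GAPDH", "ACTB", "GAPDH", "MYC",
]


def count_reads_per_gene(assignments):
    """Count how many reads were observed for each gene."""
    return Counter(assignments)


def counts_per_million(counts):
    """Scale raw counts to reads per million so runs of different sizes compare."""
    total = sum(counts.values())
    return {gene: n * 1_000_000 / total for gene, n in counts.items()}


counts = count_reads_per_gene(read_gene_assignments)
cpm = counts_per_million(counts)

for gene, n in counts.most_common():
    print(f"{gene}: {n} reads, {cpm[gene]:.0f} per million")
```

Scaling to counts per million is one simple normalization among several; it is used here only because it needs nothing beyond the total read count.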

The only way is (scaling) up!

Prior to NGS, DNA was sequenced one template at a time using technology developed by Frederick Sanger in 1975 (Sanger & Coulson 1975). The Sanger method requires a substantial quantity of pure template DNA (tens to hundreds of nanograms) cloned into a vector that provides a sequencing primer. The sequencing reaction copies the template molecules using DNA polymerase, adding nucleotides that carry a label. Originally, a radioactive label was used, and a separate reaction was conducted for each of the four DNA bases; the labeled fragments were then separated by size using electrophoresis in a polyacrylamide gel. This system was improved in the late 1980s by Leroy Hood, Michael Hunkapiller and others working with the Applied Biosystems company (ABI). The ABI system uses four different fluorescent labels, one for each of the DNA bases. This allows all four reactions to be combined and the products to be detected automatically by a fluorescence detector connected to a computer. However, the ABI system still used pure cloned template molecules, with one sequencing reaction and one lane of electrophoresis for each template. This system was used for the original Human Genome Project (from 1995 to 2001), and the only way to scale up was to gather more and more ABI sequencing machines in very large sequencing factories.

The first true NGS machine

454 Life Sciences Corporation produced the first true NGS machine in 2003 and used it to sequence the entire genome of an adenovirus (about 30,000 bases) in a single day (Pollack 2003). The initial 454 product, the Genome Sequencer 20 (GS20), was offered commercially in 2005 and produced approximately 25 million bases of DNA sequence per run, with reads 80-120 bases long. This represented a ten-fold improvement over the best Sanger-based system from Applied Biosystems. Since that time, many other companies have entered the NGS market, which is currently dominated by Illumina. The overall trend has been an astonishing growth curve in data production (per machine per day), matched by an equally sharp drop in the cost per base of sequence information obtained.

The differences and similarities in current NGS machines

Several companies currently produce high-throughput DNA sequencing machines, and their underlying technologies differ significantly, as do the types and quantities of sequence information they produce. Sample preparation, signal amplification, attachment of templates to the reaction surface, the biochemistry of the sequencing reaction itself, and the method of data collection (and signal processing) are all specific to each vendor. However, the machines share a common set of features: cutting or shearing the template DNA sample into small fragments, attaching specific primers to the ends of the fragments, attaching the fragments to a solid support, making copies of each template molecule (clusters), delivering sequencing reagents by microfluidics, and detecting a signal for each base added to each cluster. Each template molecule is affixed to a solid surface in a spatially separate location and then clonally amplified to increase signal strength. The use of many independent single-molecule templates allows rare variant sequences in a mixed population to be detected and quantified, and it eliminates the problems with cloning bias that previously made some DNA fragments difficult to sequence.

Put this article into practice

Choose a free resource to help you move forward

EBOOK

Gene Editing 101

DOWNLOAD

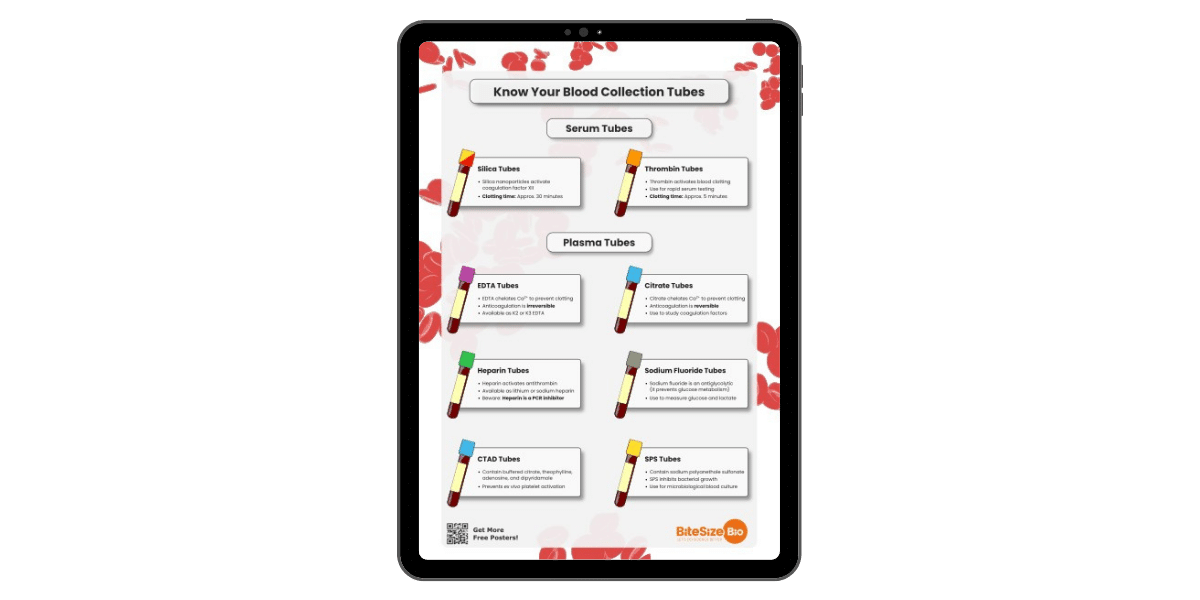

Blood Collection Tube Chart

Cheaper by the megabase

Over the past five years, the cost per base has fallen by half every five months. This is more than three times faster than the rate of improvement in computer processors, which double in speed every 18 months (Moore’s Law). As a result, sequencing centers are spending an increasing fraction of their budget on computing hardware to process and store NGS data. The cost of DNA sequencing has already dropped to the point that it is now economically feasible to sequence the entire genome of patients who are suspected of suffering from a genetic disorder. Cancer is also a genetic disease, and patients may benefit from sequencing of tumors. Currently, the primary obstacle to widespread clinical use of DNA sequencing technology is not the cost of sequencing, but the analysis, interpretation, and fundamental medical knowledge needed to put the data to medical use.
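The comparison between the two rates is easy to check with a few lines of arithmetic. The sketch below is in Python and uses only the figures quoted above (halving every 5 months, doubling every 18 months, over a five-year window). The function and variable names are illustrative, and the model assumes perfectly steady exponential change, which real prices and processors only approximate.

```python
SEQUENCING_HALVING_MONTHS = 5    # cost per base halves every 5 months
PROCESSOR_DOUBLING_MONTHS = 18   # processor speed doubles every 18 months
WINDOW_MONTHS = 5 * 12           # the five-year window discussed in the text


def fold_improvement(elapsed_months, doubling_months):
    """Fold improvement after a period of steady exponential change."""
    return 2 ** (elapsed_months / doubling_months)


sequencing_gain = fold_improvement(WINDOW_MONTHS, SEQUENCING_HALVING_MONTHS)
processor_gain = fold_improvement(WINDOW_MONTHS, PROCESSOR_DOUBLING_MONTHS)

# How many sequencing halvings fit into one processor doubling period.
rate_ratio = PROCESSOR_DOUBLING_MONTHS / SEQUENCING_HALVING_MONTHS

print(f"Sequencing cost per base improves about {sequencing_gain:,.0f}-fold")
print(f"Processor speed improves about {processor_gain:.1f}-fold")
print(f"Sequencing improves {rate_ratio:.1f} times faster")
```

Under these assumptions the gap compounds: twelve halvings of sequencing cost fit into the same five years as a little over three doublings of processor speed, which is why computing takes up a growing share of sequencing budgets.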

NGS in the clinic

Clinical sequencing is usually focused on detecting mutations, but we don’t even have a functional definition of a mutation! Every human differs in their genome sequence from every other (unrelated) human by about 0.1% (one base per thousand). So for a 3.2 billion base genome (or 6.4 billion bases, if you consider that we have two copies of every chromosome, one from each parent), everyone has more than 3 million sequence differences (variants) compared to anyone else, or compared to the standard reference genome maintained by GenBank (https://www.ncbi.nlm.nih.gov/assembly/368578). Many of these sequence variants are common alleles in the general population, and many of them have no known functional effect. Some have been associated with disease with varying degrees of certainty. The overall medical interpretation of a complete genome sequence is really beyond our current capabilities.
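The variant estimate above is simple arithmetic, shown here as a short Python sketch. The genome sizes and the rate of one difference per thousand bases are the figures quoted in the text; the names are illustrative, and the calculation assumes differences are spread evenly across the genome.

```python
HAPLOID_GENOME_BASES = 3_200_000_000   # one copy of every chromosome
DIPLOID_GENOME_BASES = 6_400_000_000   # both parental copies
BASES_PER_DIFFERENCE = 1_000           # about 0.1%, one base per thousand


def expected_variants(genome_bases, bases_per_difference=BASES_PER_DIFFERENCE):
    """Expected number of sequence differences for a genome of the given size."""
    return genome_bases // bases_per_difference


haploid_variants = expected_variants(HAPLOID_GENOME_BASES)
diploid_variants = expected_variants(DIPLOID_GENOME_BASES)

print(f"Haploid comparison: about {haploid_variants:,} variants")
print(f"Diploid comparison: about {diploid_variants:,} variants")
```

Either way, the interpretation problem is millions of candidate differences per person, most of them with no known functional effect.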

More technology than we know what to do with

There is also the matter of accuracy and certification. The technology has grown so rapidly (and is continuing to develop at such a frantic pace) that it is impossible for regulators to establish standards. Current NGS technologies (such as the Illumina HiSeq 2000 or the Complete Genomics contract sequencing service) can produce a complete human genome sequence in about a week for around $10,000. However, each individual sequence read has about a 0.5% error rate. Greater accuracy is achieved by overlapping many sequence reads and applying a variety of bioinformatics algorithms, which vary from lab to lab. Clinical sequencing is usually focused on detecting mutations, but there are no universal benchmark standards to establish accuracy, in terms of false positive and false negative rates, for each type of sequencing machine, each lab, each reagent kit and each sample preparation protocol. So at the moment, NGS technology has outstripped our ability to make effective use of it in medicine.
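To see why overlapping reads improve accuracy, consider a deliberately simplified model, sketched here in Python. It assumes that errors in different reads are independent, that the base at each position is called by simple majority vote, and (pessimistically) that all erroneous reads agree on the same wrong base. None of this describes any particular lab's pipeline: real variant callers use base quality scores and more elaborate statistical models, and real sequencing errors are not fully independent. The function name and the depths tried are illustrative.

```python
from math import comb

PER_READ_ERROR_RATE = 0.005  # the roughly 0.5% per-read error rate quoted above


def majority_vote_error(depth, per_read_error=PER_READ_ERROR_RATE):
    """Probability that more than half of `depth` overlapping reads are wrong.

    Binomial model with independent errors. Odd depths are used below so
    that a tied vote cannot occur.
    """
    min_wrong_reads = depth // 2 + 1
    return sum(
        comb(depth, k)
        * per_read_error ** k
        * (1 - per_read_error) ** (depth - k)
        for k in range(min_wrong_reads, depth + 1)
    )


for depth in (1, 3, 5, 11, 31):
    error = majority_vote_error(depth)
    print(f"depth {depth:>2}: consensus error probability ~ {error:.3g}")
```

Even in this toy model the consensus error falls steeply with depth. It also shows why results vary from lab to lab: the final accuracy depends on the coverage achieved and on the calling rules applied, not just on the machine.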

Stay tuned to this Channel as, next week, James Hadfield will expand on the different technologies from each of the three main NGS companies as well as introducing Methods and Applications!

References

Pollack A. 2003. Company Says It Mapped Genes of Virus in One Day. NY Times, Aug. 22.

Sanger F, Coulson AR. 1975. A rapid method for determining sequences in DNA by primed synthesis with DNA polymerase. J Mol Biol 94 (3): 441–448.
