- A promising study on using gene expression to develop personalized treatments for ovarian cancer.

- A report of surprisingly high levels of differential gene expression among different ethnic groups.

- The announcement of previously unsuspected levels of physiological diversity in Plasmodium falciparum, the parasite that causes the most deadly form of malaria.

What do these three seemingly disparate studies have in common? After publication, the high-profile findings in each one were questioned due to the presence of batch effects. Batch effects are ever-present and insidious in science, so as researchers we need to always be on guard against them. Keep reading for a run-down of how they can ruin next gen studies—and what you can do to prevent this from happening to you.

What are batch effects?

Batch effects occur whenever external factors associated with labwork influence the outcomes you are measuring in a study. Although next gen sequencing doesn’t appear to be as sensitive to batch effects as some other technologies—such as microarrays—they can still throw a wrench in your findings.

A great example comes from the 1000 Genomes Project, which relied on Solexa sequencing. Armed with data from nearly 3,000 genomes, a group of researchers decided to ask what sort of effect the date of sequencing had on the genetic variability identified among the sequences. They found that only 17% of sequence variability was associated with the biological differences being studied, whereas a whopping 32% could be explained by the date on which the samples were run. Eek!

Of course, the date on which a sample is run is only one factor contributing to batch effects. A switch in the technician gathering the data, differences between laboratories or even chips, changes in reagents, and alterations to the methods employed can all create unwanted variability due to batch as well.
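To make the idea of "variability explained by run date" a little more concrete, here is a minimal sketch. It is not the analysis used in the 1000 Genomes example; it simply computes, for one measured feature, the share of total variance that lies between groups (between-group sum of squares divided by total sum of squares). The function name and all the numbers are made up for illustration.

```python
from statistics import fmean


def fraction_of_variance_explained(values, labels):
    """Between-group sum of squares divided by total sum of squares."""
    grand_mean = fmean(values)
    total_ss = sum((v - grand_mean) ** 2 for v in values)
    if total_ss == 0:
        return 0.0  # no variability at all, so nothing to explain

    # Collect the measurements belonging to each group label.
    groups = {}
    for value, label in zip(values, labels):
        groups.setdefault(label, []).append(value)

    between_ss = sum(
        len(group) * (fmean(group) - grand_mean) ** 2
        for group in groups.values()
    )
    return between_ss / total_ss


# Made-up measurements for one feature across six samples.
values = [10.0, 11.0, 10.5, 14.0, 15.0, 14.5]
run_date = ["day1", "day1", "day1", "day2", "day2", "day2"]
condition = ["case", "control", "case", "control", "case", "control"]

print("run date: ", fraction_of_variance_explained(values, run_date))
print("condition:", fraction_of_variance_explained(values, condition))
```

In this toy dataset the run date accounts for nearly all of the variability and the biological condition for very little, which is the kind of pattern that should make you suspicious.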

Put this article into practice

Choose a free resource to help you move forward

EBOOK

Gene Editing 101

DOWNLOAD

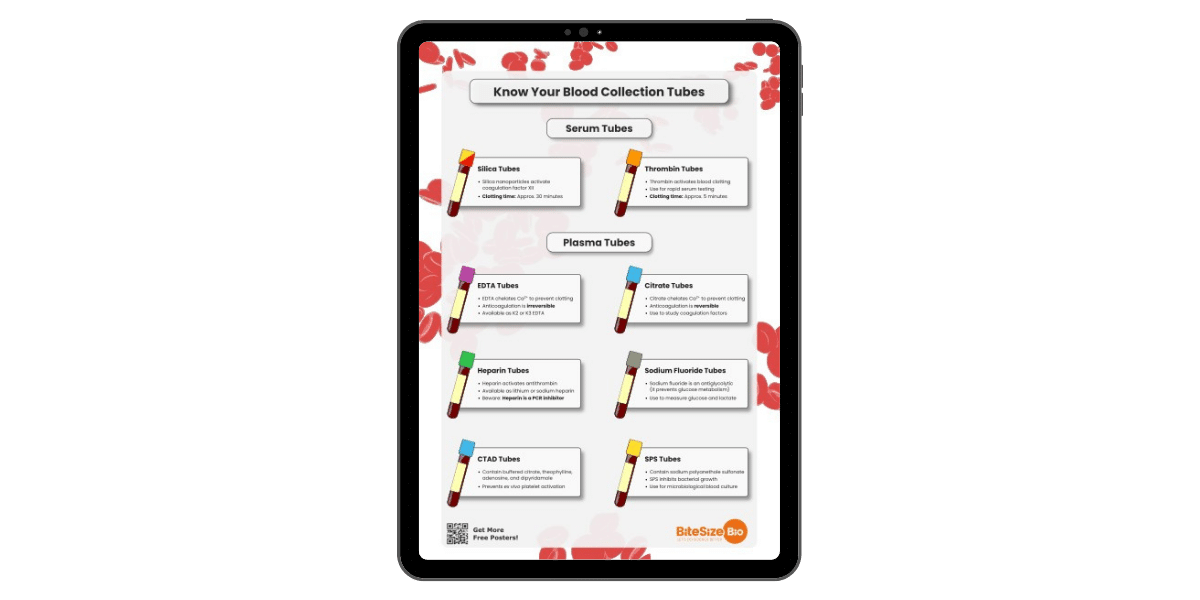

Blood Collection Tube Chart

Why are batch effects so dangerous?

Batch effects can ruin studies in two ways:

The first possibility is probably the most common. Variability related to batch gets introduced into your dataset, and this makes it more difficult to identify the signal you are actually interested in: the one related to biological variation. Sure, an interesting finding might be lurking inside your data, but you may not be able to detect it over all the noise. No fun, right?

However, it can get worse. In scenario number two, there is confounding between batch and your variable of interest. In this case, the variability in your dataset due to batch and the variability due to whatever you’re studying are so intertwined that you can’t separate the two. Best-case scenario, you recognize the problem and have to throw out your data. Worst-case scenario, you don’t realize in time and publish spurious findings. You don’t want either of these things to happen to you.
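One quick way to spot the second scenario before you sequence anything is to cross-tabulate batch against your variable of interest. The sketch below assumes each sample is a small dictionary with hypothetical `batch` and `condition` fields, and it flags any batch that contains only one condition. If every batch is flagged, batch and condition cannot be separated.

```python
from collections import Counter


def cross_tabulate(samples):
    """Count samples for each (batch, condition) combination."""
    return Counter((s["batch"], s["condition"]) for s in samples)


def single_condition_batches(samples):
    """Return the batches that contain only one condition."""
    conditions_by_batch = {}
    for s in samples:
        conditions_by_batch.setdefault(s["batch"], set()).add(s["condition"])
    return sorted(
        batch
        for batch, conditions in conditions_by_batch.items()
        if len(conditions) == 1
    )


# Hypothetical design: every case on run1, every control on run2.
confounded_design = [
    {"id": "s1", "batch": "run1", "condition": "case"},
    {"id": "s2", "batch": "run1", "condition": "case"},
    {"id": "s3", "batch": "run2", "condition": "control"},
    {"id": "s4", "batch": "run2", "condition": "control"},
]

# Hypothetical design: cases and controls mixed within each run.
mixed_design = [
    {"id": "s1", "batch": "run1", "condition": "case"},
    {"id": "s2", "batch": "run1", "condition": "control"},
    {"id": "s3", "batch": "run2", "condition": "case"},
    {"id": "s4", "batch": "run2", "condition": "control"},
]

print(cross_tabulate(confounded_design))
print(single_condition_batches(confounded_design))
print(single_condition_batches(mixed_design))
```

The check is deliberately blunt. A design can still be badly unbalanced without any batch being flagged, so it is worth looking at the full cross-tabulation as well.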

How do I guard against batch effects?

Unfortunately, we can never rid our studies of batch effects. Because we can never measure each batch of samples in exactly the same way, batch-related differences will always be around. We can, however, take steps to minimize the effect they have on our data.

– The first step is a simple one, and it’s probably something you are doing already. You can do your best to ensure that differences in sample handling are minimal. This will cut down on the variation in your data attributed to batch.

– The second step is also simple, but failure to complete it has created big headaches for many researchers: be sure to randomize the order in which your samples are sequenced. This will ensure that you don’t end up with confounding between batch and your variable of interest. Randomizing will give you confidence that your findings reflect differences between the samples themselves and not differences in the way they were processed.

– The third and last step is to adjust for batch at the data analysis stage. Keeping good notes on how and when samples were run can aid you here, as this information can be incorporated into your statistical analysis. Statistical methods for adjusting for batch effects range from the very simple (generalized linear models including batch-related variables) to the very sophisticated. Before despairing that there is nothing interesting in your data, be sure that you have explored adjusting for batch effects. They may be masking the signal you are trying to find!
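The second and third steps can be sketched in a few lines. This is only an illustration under stated assumptions: `assign_runs` and `center_by_batch` are hypothetical helper names, samples are dictionaries with made-up `id` and `condition` fields, and the "adjustment" is simple per-batch mean centering. Centering is a crude stand-in for putting a batch term in a linear model, and it is only reasonable when conditions are spread evenly across batches.

```python
import random
from statistics import fmean


def assign_runs(samples, n_runs, seed=0):
    """Shuffle samples within each condition, then deal them out to
    runs round-robin so every run receives a mix of conditions."""
    rng = random.Random(seed)  # fixed seed keeps the plan reproducible
    by_condition = {}
    for s in samples:
        by_condition.setdefault(s["condition"], []).append(s)

    assignment = {}
    slot = 0
    for condition in sorted(by_condition):
        group = list(by_condition[condition])
        rng.shuffle(group)
        for s in group:
            assignment[s["id"]] = slot % n_runs
            slot += 1
    return assignment


def center_by_batch(values, batches):
    """Subtract each batch's mean and add back the overall mean."""
    grand_mean = fmean(values)
    groups = {}
    for value, batch in zip(values, batches):
        groups.setdefault(batch, []).append(value)
    batch_mean = {batch: fmean(group) for batch, group in groups.items()}
    return [
        value - batch_mean[batch] + grand_mean
        for value, batch in zip(values, batches)
    ]


# Hypothetical study: four cases and four controls, two sequencing runs.
samples = [
    {"id": f"s{i}", "condition": "case" if i < 4 else "control"}
    for i in range(8)
]
plan = assign_runs(samples, n_runs=2, seed=1)
print(plan)

# Made-up measurements where batch "b" reads systematically higher.
adjusted = center_by_batch([10.0, 12.0, 14.0, 16.0], ["a", "a", "b", "b"])
print(adjusted)
```

Writing the run assignment down before you start, along with dates, operators, and reagent lots, is what makes the adjustment step possible later on.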

So take heart. Though batch effects will always be lurking in your next gen data, by simply being aware of them you can take simple steps to combat them and let your data shine.
